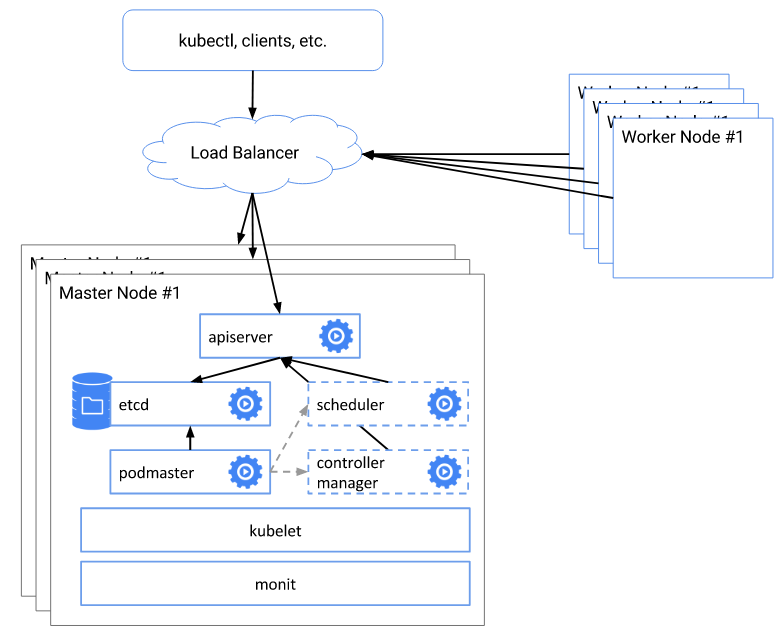

1. Kubernetes high-availability architecture

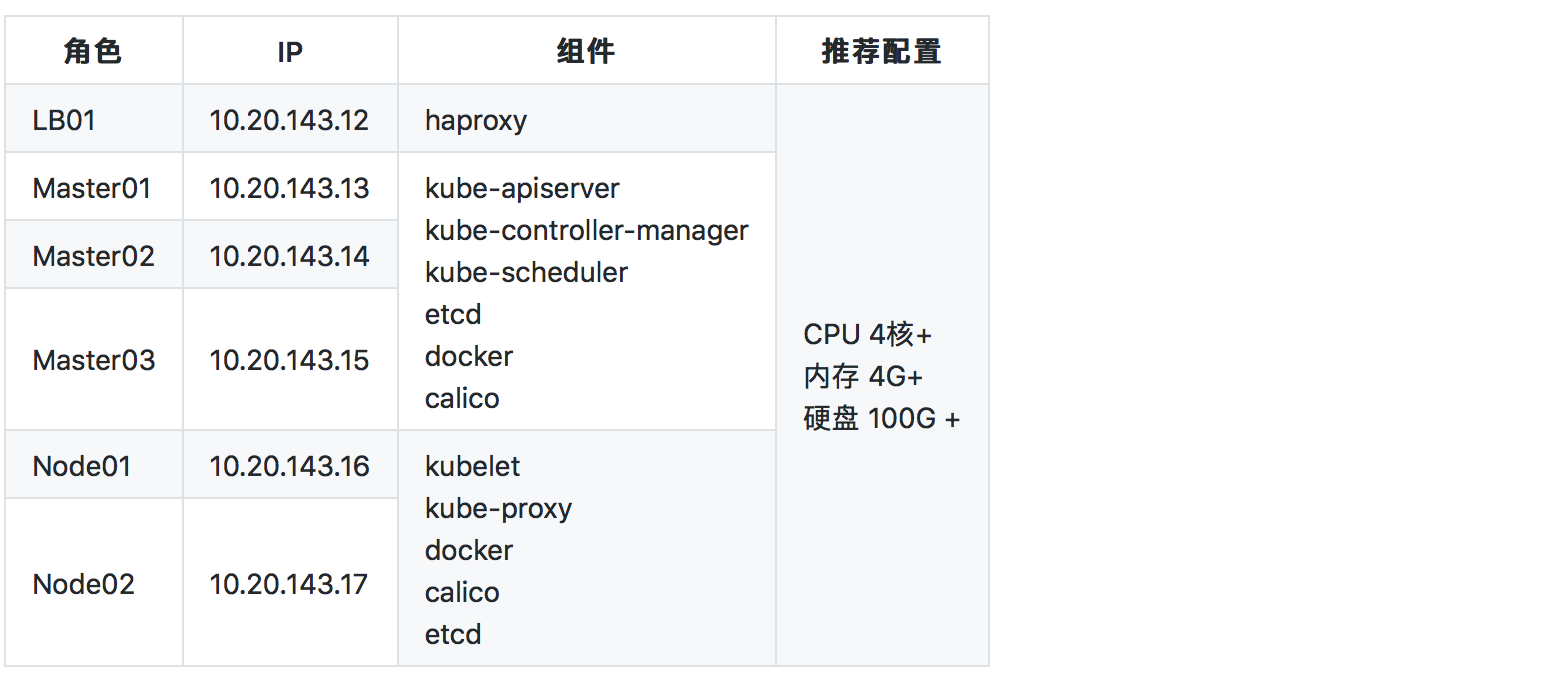

2. Environment planning

3. Preparation

1. Set the hostname. The Kubernetes cluster identifies nodes by hostname, so make sure every hostname is unique (on every node)

# Replace ${hostname} with the planned hostname, for example ns.k8s.master01 or ns.k8s.node01

$ hostnamectl set-hostname ${hostname}
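
Because each node registers under its hostname, it can help to check a planned name before applying it. Kubernetes node names must be valid lowercase DNS subdomain names (RFC 1123). The following is a minimal sketch of such a check; the function name `is_valid_node_name` is hypothetical and not part of the installation package.

```shell
# Hypothetical helper: succeeds when the argument looks like a valid
# Kubernetes node name (lowercase RFC 1123 DNS subdomain, at most 253 chars).
is_valid_node_name() {
    name=$1
    [ -n "$name" ] && [ "${#name}" -le 253 ] || return 1
    printf '%s\n' "$name" |
        grep -Eq '^[a-z0-9]([-a-z0-9]*[a-z0-9])?(\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*$'
}

# Example: check candidate names before passing one to hostnamectl.
for candidate in ns.k8s.master01 NS_K8S_Master01; do
    if is_valid_node_name "$candidate"; then
        echo "$candidate: ok"
    else
        echo "$candidate: invalid"
    fi
done
```

The check only covers the name's syntax; uniqueness across nodes still has to be ensured by the planning in section 2.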

2. Install Docker (on every node)

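#0. Remove any old Docker version. If version 1.12 was previously installed via yum, it can be removed this way; skip this step if Docker is not installed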

$ yum remove -y docker*

#1. Install the required packages

$ yum install -y yum-utils \

device-mapper-persistent-data \

lvm2 libtool-ltdl libseccomp

#2. Add the Aliyun repository

$ yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

#3. List the versions that are actually available (optional)

$ yum list docker-ce --showduplicates | sort -r

#4. Install version 17.12.0

$ yum -y install docker-ce-17.12.0.ce

#5. Configure the Docker daemon options

$ mkdir -p /etc/docker

$ cat <<EOF > /etc/docker/daemon.json

{

"log-opts": {

"max-size": "100m",

"max-file": "10"

},

"graph": "/opt/data/docker/"

}

EOF

#6. Start the Docker service and enable it at boot

$ systemctl start docker && systemctl enable docker

3. Upload the installation package (kubernetes1.9.2.tar.gz) to /opt on every node

Package download link: https://pan.baidu.com/s/1ejc484oTJaPi16fWbcX5_g password: snvu

3.1. Extract the package

$ tar -zxvf /opt/kubernetes1.9.2.tar.gz -C /opt/ && cd /opt/kubernetes1.9.2 && find ./ -name '._*' -delete

3.2. Package contents

├── bin # Kubernetes binaries required for the installation

│   ├── cfssl # tools for self-signed certificates

│   │   ├── cfssl

│   │   ├── cfssl-certinfo

│   │   └── cfssljson

│   ├── etcd-v3.2.12-linux-amd64.tar.gz

│   ├── kubeadm # tool for quickly creating a Kubernetes cluster

│   ├── kubectl # Kubernetes client tool for sending commands to the cluster

│   └── kubelet # maintains the container lifecycle and also manages volumes (CSI) and networking (CNI)

├── configuration # all configuration files

│   ├── dashboard # dashboard configuration

│   │   ├── dashboard-admin.yaml

│   │   └── kubernetes-dashboard.yaml

│   ├── haproxy # haproxy configuration

│   │   └── haproxy.cfg

│   ├── heapster # heapster YAML configuration

│   │   ├── influxdb

│   │   │   ├── grafana.yaml

│   │   │   ├── heapster.yaml

│   │   │   └── influxdb.yaml

│   │   └── rbac

│   │       └── heapster-rbac.yaml

│   ├── ingress # ingress (routing) configuration

│   │   ├── README.md

│   │   ├── configmap.yaml

│   │   ├── default-backend.yaml

│   │   ├── namespace.yaml

│   │   ├── provider

│   │   │   └── baremetal

│   │   │       └── service-nodeport.yaml

│   │   ├── rbac.md

│   │   ├── rbac.yaml

│   │   ├── tcp-services-configmap.yaml

│   │   ├── udp-services-configmap.yaml

│   │   └── with-rbac.yaml

│   ├── kube # configuration for Kubernetes itself

│   │   ├── 10-kubeadm.conf

│   │   ├── config # kubeadm configuration

│   │   └── kubelet.service

│   ├── net # network configuration

│   │   ├── calico-tls.yaml

│   │   ├── calicoctl.yaml

│   │   └── rbac.yaml

│   └── ssl # self-signed certificate configuration

│       ├── ca-config.json

│       ├── ca-csr.json

│       └── client.json

├── image # all required image archives

│   └── images.tar

└── shell # initialization scripts

    └── init.sh # initializes the node: installs the binaries, sets up the systemd units, disables the firewall, SELinux, and so on

3.3. Initialize the nodes (all nodes)

$ cd /opt/kubernetes1.9.2/shell && sh init.sh

4. Cluster deployment

1. Self-signed TLS certificates

1.1. Install cfssl, cfssljson, and cfssl-certinfo (run on all three masters)

$ mv /opt/kubernetes1.9.2/bin/cfssl/cfssl* /usr/local/bin/

$ chmod +x /usr/local/bin/cfssl*

1.2. Generate the CA certificate; run on Master01 only (produces ca-key.pem, ca.pem, ca.csr)

$ cd /opt/kubernetes1.9.2/configuration/ssl

$ cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

1.3. Generate the client certificate; run on Master01 only (produces client-key.pem, client.pem, client.csr)

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=client client.json | cfssljson -bare client

1.4. Create the certificate directory and set the PEER_NAME and PRIVATE_IP environment variables (run on all three masters)

$ mkdir -p /etc/kubernetes/pki/etcd

# Note: eth0 below must be the name of your actual network interface; it may be eth1 or similar. Check with ip addr.

$ export PEER_NAME=$(hostname)

$ export PRIVATE_IP=$(ip addr show eth0 | grep -Po 'inet \K[\d.]+')
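
If the interface carries more than one IPv4 address, the `grep -Po` expression above prints all of them, and PRIVATE_IP ends up holding several lines. The following is a minimal sketch of a stricter extraction that keeps only the first address; the helper name `first_ipv4` and the sample text are assumptions for illustration.

```shell
# Hypothetical helper: print the first IPv4 address found in
# `ip addr show <iface>` output read from standard input.
first_ipv4() {
    awk '$1 == "inet" { sub(/\/.*/, "", $2); print $2; exit }'
}

# Sample text in the shape printed by `ip addr show eth0` (illustrative values).
sample='2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP
    link/ether 52:54:00:12:34:56 brd ff:ff:ff:ff:ff:ff
    inet 10.20.143.13/24 brd 10.20.143.255 scope global eth0
    inet 10.20.143.99/24 scope global secondary eth0
    inet6 fe80::5054:ff:fe12:3456/64 scope link'

printf '%s\n' "$sample" | first_ipv4

# On a real node this could be used as:
#   export PRIVATE_IP=$(ip addr show eth0 | first_ipv4)
```

Whether the first address is the right one depends on the host; on a node with several addresses it is safer to set PRIVATE_IP by hand.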

1.5. On Master01, copy the certificates to Master02 and Master03

$ mv ca.pem ca-key.pem client.pem client-key.pem ca-config.json /etc/kubernetes/pki/etcd/

$ scp /etc/kubernetes/pki/etcd/* root@<master02-ipaddress>:/etc/kubernetes/pki/etcd/

$ scp /etc/kubernetes/pki/etcd/* root@<master03-ipaddress>:/etc/kubernetes/pki/etcd/

1.6 Generate peer.pem, peer-key.pem, server.pem, and server-key.pem (run on all three masters)

$ cd /etc/kubernetes/pki/etcd

$ cfssl print-defaults csr > config.json

$ sed -i '0,/CN/{s/example\.net/'"$PEER_NAME"'/}' config.json

$ sed -i 's/www\.example\.net/'"$PRIVATE_IP"'/' config.json

$ sed -i 's/example\.net/'"$PEER_NAME"'/' config.json

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=server config.json | cfssljson -bare server

$ cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=peer config.json | cfssljson -bare peer
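
The three sed commands in step 1.6 rewrite the default CSR so that its CN and hosts entries carry this node's name and IP. The sketch below replays the same substitutions on a hand-written sample that is only assumed to resemble the output of `cfssl print-defaults csr`; the helper name `render_csr` is hypothetical, and the `0,/CN/` address requires GNU sed, as in the original commands.

```shell
# Sample values; on a real master these come from step 1.4.
PEER_NAME=k8s-master01
PRIVATE_IP=10.20.143.13

# Hand-written sample, assumed to resemble `cfssl print-defaults csr` output.
sample='{
    "CN": "example.net",
    "hosts": [
        "example.net",
        "www.example.net"
    ]
}'

# Hypothetical helper: the same three substitutions as in step 1.6,
# applied in one pass to standard input (GNU sed).
render_csr() {
    sed -e '0,/CN/{s/example\.net/'"$PEER_NAME"'/}' \
        -e 's/www\.example\.net/'"$PRIVATE_IP"'/' \
        -e 's/example\.net/'"$PEER_NAME"'/'
}

printf '%s\n' "$sample" | render_csr
```

After the substitutions, the CN and the first hosts entry hold the peer name and the second hosts entry holds the private IP, which is what the server and peer certificates need in order to be accepted by the other etcd members.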

2. Install the etcd cluster (run on all three masters)

2.1 Install etcd

$ tar -zxvf /opt/kubernetes1.9.2/bin/etcd-v3.2.12-linux-amd64.tar.gz --strip-components=1 -C /usr/local/bin/

2.2 Generate the etcd environment file

$ touch /etc/etcd.env

$ echo "PEER_NAME=$PEER_NAME" >> /etc/etcd.env

$ echo "PRIVATE_IP=$PRIVATE_IP" >> /etc/etcd.env

2.3 Create the systemd unit file

$ export ETCD0_IP=10.20.143.13

$ export ETCD1_IP=10.20.143.14

$ export ETCD2_IP=10.20.143.15

$ export ETCD0_NAME=k8s-master01

$ export ETCD1_NAME=k8s-master02

$ export ETCD2_NAME=k8s-master03

$ cat >/etc/systemd/system/etcd.service <<EOF

[Unit]

Description=etcd

Documentation=https://github.com/coreos/etcd

Conflicts=etcd.service

Conflicts=etcd2.service

[Service]

EnvironmentFile=/etc/etcd.env

Type=notify

Restart=always

RestartSec=5s

LimitNOFILE=40000

TimeoutStartSec=0

ExecStart=/usr/local/bin/etcd --name ${PEER_NAME} \

--data-dir /var/lib/etcd \

--listen-client-urls https://${PRIVATE_IP}:2379 \

--advertise-client-urls https://${PRIVATE_IP}:2379 \

--listen-peer-urls https://${PRIVATE_IP}:2380 \

--initial-advertise-peer-urls https://${PRIVATE_IP}:2380 \

--cert-file=/etc/kubernetes/pki/etcd/server.pem \

--key-file=/etc/kubernetes/pki/etcd/server-key.pem \

--client-cert-auth \

--trusted-ca-file=/etc/kubernetes/pki/etcd/ca.pem \

--peer-cert-file=/etc/kubernetes/pki/etcd/peer.pem \

--peer-key-file=/etc/kubernetes/pki/etcd/peer-key.pem \

--peer-client-cert-auth \

--peer-trusted-ca-file=/etc/kubernetes/pki/etcd/ca.pem \

--initial-cluster ${ETCD0_NAME}=https://${ETCD0_IP}:2380,${ETCD1_NAME}=https://${ETCD1_IP}:2380,${ETCD2_NAME}=https://${ETCD2_IP}:2380 \

--initial-cluster-token my-etcd-token \

--initial-cluster-state new

[Install]

WantedBy=multi-user.target

EOF
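
The value passed to `--initial-cluster` must be identical on all three masters, so it can be convenient to build it from one list of name and IP pairs. The following is a minimal sketch; the helper name `initial_cluster` is hypothetical, and the peer port 2380 and HTTPS scheme are taken from the unit file above.

```shell
# Hypothetical helper: join "name=ip" pairs into the value expected by
# etcd's --initial-cluster flag (HTTPS, peer port 2380).
initial_cluster() {
    out=
    for pair in "$@"; do
        name=${pair%%=*}
        ip=${pair#*=}
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

initial_cluster k8s-master01=10.20.143.13 k8s-master02=10.20.143.14 k8s-master03=10.20.143.15
```

The member names in this list have to match the `--name` value each etcd instance starts with, which in this guide is the PEER_NAME of the node.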

2.4. Start the etcd cluster

$ systemctl daemon-reload

$ systemctl start etcd

$ systemctl enable etcd

2.5. Verify the cluster status

$ etcdctl --cert-file=/etc/kubernetes/pki/etcd/server.pem --ca-file=/etc/kubernetes/pki/etcd/ca.pem --key-file=/etc/kubernetes/pki/etcd/server-key.pem --endpoints=https://10.20.143.13:2379,https://10.20.143.14:2379,https://10.20.143.15:2379 member list

# Output similar to the following means the cluster was installed successfully

6833d3ff7f25e53c: name=k8s.test.master03 peerURLs=https://10.20.143.15:2380 clientURLs=https://10.20.143.15:2379 isLeader=false

d715f6e539919304: name=k8s.test.master02 peerURLs=https://10.20.143.14:2380 clientURLs=https://10.20.143.14:2379 isLeader=false

e2c6d0f2545d5d78: name=k8s.test.master01 peerURLs=https://10.20.143.13:2380 clientURLs=https://10.20.143.13:2379 isLeader=true
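
The same check can be scripted: a healthy three-node cluster lists three members and exactly one leader. The following is a minimal sketch that parses the `member list` output shown above; the helper name `check_members` is hypothetical.

```shell
# Hypothetical helper: read `etcdctl member list` (v2 API) output on stdin and
# succeed only if it lists the expected member count and exactly one leader.
check_members() {
    expected=$1
    awk -v want="$expected" '
        /peerURLs=/     { members++ }
        /isLeader=true/ { leaders++ }
        END { exit !(members == want && leaders == 1) }
    '
}

# Sample output copied from the listing above.
sample='6833d3ff7f25e53c: name=k8s.test.master03 peerURLs=https://10.20.143.15:2380 clientURLs=https://10.20.143.15:2379 isLeader=false
d715f6e539919304: name=k8s.test.master02 peerURLs=https://10.20.143.14:2380 clientURLs=https://10.20.143.14:2379 isLeader=false
e2c6d0f2545d5d78: name=k8s.test.master01 peerURLs=https://10.20.143.13:2380 clientURLs=https://10.20.143.13:2379 isLeader=true'

if printf '%s\n' "$sample" | check_members 3; then
    echo "etcd cluster looks healthy"
else
    echo "unexpected member list"
fi
```

On a real master, the output of the etcdctl command above would be piped into `check_members 3` instead of the sample text.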

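3. Create the Kubernetes cluster

3.1. kubeadm configuration

Edit the configuration file /opt/kubernetes1.9.2/configuration/kube/config

apiServerCertSANs # List every master IP, the load balancer IP, and any other address, domain name, or hostname through which you may need to reach the apiserver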

apiVersion: kubeadm.k8s.io/v1alpha1

kind: MasterConfiguration

apiServerCertSANs:

- 10.20.143.12

- 10.20.143.13

- 10.20.143.14

- 10.20.143.15

- k8s-master01

- k8s-master02

- k8s-master03

- k8s-node01

- k8s-node02

etcd:

  endpoints:

  - https://10.20.143.13:2379

  - https://10.20.143.14:2379

  - https://10.20.143.15:2379

  caFile: /etc/kubernetes/pki/etcd/ca.pem

  certFile: /etc/kubernetes/pki/etcd/client.pem

  keyFile: /etc/kubernetes/pki/etcd/client-key.pem

apiServerExtraArgs:

  apiserver-count: 3

networking:

  podSubnet: 192.168.0.0/16

kubernetesVersion: v1.9.2

featureGates:

  CoreDNS: true

3.2 Run kubeadm

$ kubeadm init --config=/opt/kubernetes1.9.2/configuration/kube/config

$ mkdir ~/.kube && cp /etc/kubernetes/admin.conf ~/.kube/config

Make a note of the generated kubeadm join command

kubeadm join --token c97226.dc9b3c8ab883b5cb 10.20.143.13:6443 --discovery-token-ca-cert-hash sha256:70e980b465032f712c06fe9ccecb116c7b2dbd4f682edf88e6627324b583a9d0
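
Since the join command is needed again in step 3.5, it is worth checking that the token and hash were copied completely. A kubeadm bootstrap token has the shape of 6 characters, a dot, and 16 characters from `[a-z0-9]`, and the CA hash is `sha256:` followed by 64 hexadecimal digits. The following is a minimal sketch of both checks; the helper names are hypothetical.

```shell
# Hypothetical helper: succeed when the argument has the kubeadm bootstrap
# token shape (6 characters, a dot, 16 characters, all from [a-z0-9]).
is_bootstrap_token() {
    printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Hypothetical helper: succeed when the argument is "sha256:" followed by
# 64 lowercase hexadecimal digits.
is_ca_cert_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# Values copied from the join command shown above.
token=c97226.dc9b3c8ab883b5cb
hash=sha256:70e980b465032f712c06fe9ccecb116c7b2dbd4f682edf88e6627324b583a9d0

is_bootstrap_token "$token" && echo "token format ok"
is_ca_cert_hash "$hash" && echo "hash format ok"
```

These checks only validate the format; whether the token is still valid on the cluster is a separate question that the format cannot answer.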

3.3 Bring up the additional masters

On master01, copy the relevant configuration files to master02 and master03

$ scp /etc/kubernetes/pki/* root@10.20.143.14:/etc/kubernetes/pki/

$ scp /etc/kubernetes/pki/* root@10.20.143.15:/etc/kubernetes/pki/

$ scp /opt/kubernetes1.9.2/configuration/kube/config root@10.20.143.14:/root/

$ scp /opt/kubernetes1.9.2/configuration/kube/config root@10.20.143.15:/root/

Log in to master02 and master03 and run the same commands on each

# Delete apiserver.crt and apiserver.key from the pki directory

$ cd /etc/kubernetes/pki/

$ rm -rf apiserver.crt apiserver.key

# Initialize master02 and master03

$ kubeadm init --config ~/config

Verify the master cluster

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s.test.master01 Ready master 2d v1.9.2

k8s.test.master02 Ready master 2d v1.9.2

k8s.test.master03 Ready master 2d v1.9.2

3.4 Start the load balancer (run on the load balancer node)

3.4.1 Edit the configuration file

$ cat /opt/kubernetes1.9.2/configuration/haproxy/haproxy.cfg

global

daemon

log 127.0.0.1 local0

log 127.0.0.1 local1 notice

maxconn 4096

defaults

log global

retries 3

maxconn 2000

timeout connect 5s

timeout client 50s

timeout server 50s

frontend k8s

bind *:6444 # The configuration file in the package has an extra colon here; be sure to remove it

mode tcp

default_backend k8s-backend

backend k8s-backend

balance roundrobin

mode tcp

# Replace the three IPs below with the addresses of your own masters

server k8s-0 10.20.143.13:6443 check

server k8s-1 10.20.143.14:6443 check

server k8s-2 10.20.143.15:6443 check
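
The three `server` lines are the only part of this file that depends on the environment, so they can be generated from the list of master IPs. The following is a minimal sketch; the helper name `backend_servers` is hypothetical, and the `k8s-N` naming and port 6443 follow the configuration above.

```shell
# Hypothetical helper: print one haproxy "server" line per master IP,
# named k8s-0, k8s-1, ... and pointing at the apiserver port 6443.
backend_servers() {
    i=0
    for ip in "$@"; do
        printf 'server k8s-%d %s:6443 check\n' "$i" "$ip"
        i=$((i + 1))
    done
}

backend_servers 10.20.143.13 10.20.143.14 10.20.143.15
```

Once the file is edited, `haproxy -c -f <file>` can be used to check its syntax where an haproxy binary is available; in this guide haproxy only runs inside the container started in the next step.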

3.4.2 Start haproxy

$ mkdir /etc/haproxy

$ cp /opt/kubernetes1.9.2/configuration/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg

$ docker run --restart always --net=host -v /etc/haproxy:/usr/local/etc/haproxy --name k8s-haproxy -d haproxy:1.7

3.4.3 Update the kube-proxy configuration (on master01)

$ kubectl -n kube-system edit configmap kube-proxy

# Find this block in the file; the server entry on line 7 holds an IP address

apiVersion: v1

kind: Config

clusters:

- cluster:

    certificate-authority: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

    server: https://10.230.204.151:6443 # change to LoadBalanceIP:6444

  name: default

contexts:

- context:

    cluster: default

    namespace: default

    user: default

  name: default

current-context: default

users:

- name: default

  user:

    tokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token

3.5 Join the worker nodes

kubeadm join

$ kubeadm join --token <token> 10.20.143.13:6443 --discovery-token-ca-cert-hash sha256:<hash>

Update the kubelet configuration on each worker node

$ vim /etc/kubernetes/kubelet.conf

apiVersion: v1

clusters:

- cluster:

    certificate-authority-data: xxxxxx # several hundred characters omitted here

    server: https://10.20.143.12:6444 # change this to LB:6444

  name: default-cluster

contexts:

- context:

    cluster: default-cluster

    namespace: default

    user: default-auth

  name: default-context

current-context: default-context

$ systemctl restart kubelet
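
With more than a few worker nodes, editing kubelet.conf by hand becomes tedious. The following is a minimal sketch that rewrites the `server:` entry with sed instead; the helper name `set_apiserver_endpoint` is hypothetical, the demo runs against a temporary sample file rather than the real /etc/kubernetes/kubelet.conf, and `sed -i` is used as elsewhere in this guide (GNU sed).

```shell
# Hypothetical helper: rewrite the "server:" entry of a kubeconfig-style file
# so that it points at the given host:port, keeping the indentation.
set_apiserver_endpoint() {
    file=$1
    endpoint=$2
    sed -i "s#^\([[:space:]]*server:\) https://[^[:space:]]*#\1 https://${endpoint}#" "$file"
}

# Demo on a temporary file shaped like the excerpt above.
conf=$(mktemp)
cat > "$conf" <<'EOF'
clusters:
- cluster:
    certificate-authority-data: xxxxxx
    server: https://10.20.143.13:6443
  name: default-cluster
EOF

set_apiserver_endpoint "$conf" 10.20.143.12:6444
grep 'server:' "$conf"
```

On a real node the call would target /etc/kubernetes/kubelet.conf and be followed by the kubelet restart shown above.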

3.6 Create the Calico CNI network (on master01)

Configure the TLS secret calico-etcd-secrets that the Calico network requires

$ cd /opt/kubernetes1.9.2/configuration/net

$ sed -i "s/\$ETCDCA/`cat /etc/kubernetes/pki/etcd/ca.pem | base64 | tr -d '\n'`/" calico-tls.yaml

$ sed -i "s/\$ETCDCERT/`cat /etc/kubernetes/pki/etcd/server.pem | base64 | tr -d '\n'`/" calico-tls.yaml

$ sed -i "s/\$ETCDKYE/`cat /etc/kubernetes/pki/etcd/server-key.pem | base64 | tr -d '\n'`/" calico-tls.yaml
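
The `tr -d '\n'` in these commands matters: base64 wraps long output across several lines, and a replacement string containing newlines would break the sed `s` command. The following is a minimal sketch of the same substitution on placeholder files; the helper name `b64_oneline`, the template line, and the input text are assumptions for illustration and do not come from calico-tls.yaml.

```shell
# Hypothetical helper: base64-encode a file as one line, removing the
# newlines that base64 adds when it wraps long output.
b64_oneline() {
    base64 < "$1" | tr -d '\n'
}

# Placeholder input, not a real certificate; long enough for base64 to wrap.
pem=$(mktemp)
printf '%s\n' \
    'placeholder line one, long enough that plain base64 output would wrap onto a second line' \
    'placeholder line two' > "$pem"

# Placeholder template line standing in for the manifest.
template=$(mktemp)
printf '%s\n' 'etcd-ca: $ETCDCA' > "$template"

# Same substitution pattern as the commands above.
sed -i "s/\$ETCDCA/$(b64_oneline "$pem")/" "$template"
cat "$template"
```

Decoding the substituted value with `base64 -d` should give back the original file, which is a quick way to confirm that the secret in the manifest is intact.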

In calico-tls.yaml, set etcd_endpoints to the addresses of the etcd cluster installed above

# Calico Version v3.0.4

# https://docs.projectcalico.org/v3.0/releases#v3.0.4

# This manifest includes the following component versions:

# calico/node:v3.0.4

# calico/cni:v2.0.3

# calico/kube-controllers:v2.0.2

# This ConfigMap is used to configure a self-hosted Calico installation.

kind: ConfigMap

apiVersion: v1

metadata:

  name: calico-config

  namespace: kube-system

data:

  # Configure this with the location of your etcd cluster.

  etcd_endpoints: "https://127.0.0.1:2379" # change to the correct etcd cluster addresses

  # Configure the Calico backend to use.

  calico_backend: "bird"

# The CNI network configuration to install on each node.

Install the network

$ kubectl apply -f rbac.yaml

$ kubectl apply -f calico-tls.yaml

3.7 Install monitoring and the web UI (on master01)

$ cd /opt/kubernetes1.9.2/configuration

$ kubectl apply -f heapster/influxdb

$ kubectl apply -f heapster/rbac

$ kubectl apply -f dashboard

Web UI address: https://:32000

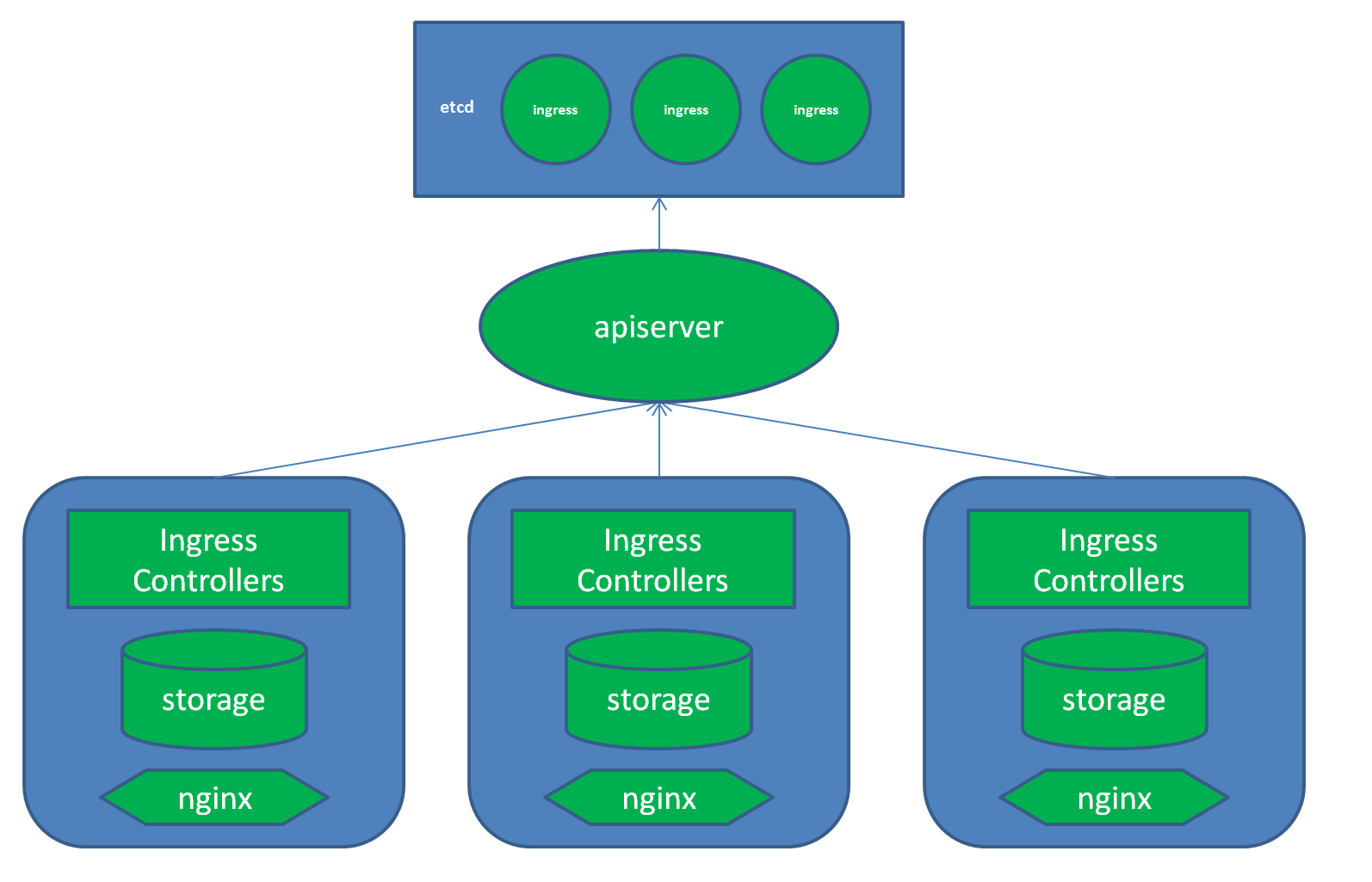

5. Deploy Ingress

1. Architecture diagram

2. Create ingress-nginx

$ cd /opt/kubernetes1.9.2/configuration/ingress

$ kubectl apply -f namespace.yaml

$ kubectl apply -f default-backend.yaml

$ kubectl apply -f configmap.yaml

$ kubectl apply -f tcp-services-configmap.yaml

$ kubectl apply -f udp-services-configmap.yaml

$ kubectl apply -f rbac.yaml

$ kubectl apply -f with-rbac.yaml

$ cd /opt/kubernetes1.9.2/configuration/ingress/provider/baremetal

$ kubectl apply -f service-nodeport.yaml

$ kubectl get svc -n ingress-nginx

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

default-http-backend ClusterIP 10.109.228.128 <none> 80/TCP 2h

ingress-nginx NodePort 10.97.114.177 <none> 80:32358/TCP,443:31164/TCP 2h
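
The node port assigned to port 80 of the ingress-nginx service (32358 in this output) is needed later for the load balancer backend. The following is a minimal sketch that extracts it from the tabular output; the helper name `node_port` is hypothetical, and it assumes the six-column layout shown above.

```shell
# Hypothetical helper: read `kubectl get svc` output on stdin and print the
# node port mapped to the given service port of the named service.
node_port() {
    svc=$1
    port=$2
    awk -v svc="$svc" -v port="$port" '
        $1 == svc {
            n = split($5, maps, ",")
            for (i = 1; i <= n; i++) {
                split(maps[i], parts, "[:/]")
                if (parts[1] == port) { print parts[2]; exit }
            }
        }
    '
}

# Sample output copied from the listing above.
sample='NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
default-http-backend ClusterIP 10.109.228.128 <none> 80/TCP 2h
ingress-nginx NodePort 10.97.114.177 <none> 80:32358/TCP,443:31164/TCP 2h'

printf '%s\n' "$sample" | node_port ingress-nginx 80

# On a real cluster:
#   kubectl get svc -n ingress-nginx | node_port ingress-nginx 80
```

Node ports are assigned per cluster, so the value on another installation will most likely differ from 32358.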

3. Test and verify

$ kubectl run --image=nginx nginx-web01

$ kubectl expose deployment nginx-web01 --port=80

$ kubectl run --image=nginx nginx-web02

$ kubectl expose deployment nginx-web02 --port=80

$ cat <<EOF > nginx-web-test.yaml

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

  name: ingress-http-test

spec:

  rules:

  - host: web01.test.com

    http:

      paths:

      - backend:

          serviceName: nginx-web01

          servicePort: 80

  - host: web02.test.com

    http:

      paths:

      - backend:

          serviceName: nginx-web02

          servicePort: 80

EOF

$ kubectl apply -f nginx-web-test.yaml

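Load balancer configuration: point the domain names at the load balancer, either through DNS or through hosts entries, and then access the domains.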

frontend http-test

bind *:80

mode tcp

default_backend http-test

backend http-test

balance roundrobin

mode tcp

server k8s-0 10.20.143.13:32358 check

server k8s-1 10.20.143.14:32358 check

server k8s-2 10.20.143.15:32358 check
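
For a quick test without DNS, the two test domains can be bound to the load balancer in a hosts file. The following is a minimal sketch that prints the entries; the helper name `hosts_entries` is hypothetical, and 10.20.143.12 is assumed to be the load balancer address, as in the earlier examples.

```shell
# Hypothetical helper: print one hosts-file line per domain, all pointing
# at the given load balancer IP.
hosts_entries() {
    lb_ip=$1
    shift
    for host in "$@"; do
        printf '%s %s\n' "$lb_ip" "$host"
    done
}

hosts_entries 10.20.143.12 web01.test.com web02.test.com
```

The printed lines can be appended to /etc/hosts on the client machine. Alternatively, a request such as `curl -H 'Host: web01.test.com' http://10.20.143.12/` exercises the same routing without touching the hosts file.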

Done.

References:

https://kubernetes.io/docs/setup/independent/high-availability/

https://segmentfault.com/a/1190000013262609?spm=5176.730006-cmxz025618.102.7.HmMX5q

https://segmentfault.com/a/1190000013611571